Abstract

This report offers a non-technical but thorough explanation of “blockchain technology” with a focus on the key variables within consensus mechanism design that differentiate so-called permissioned or closed and permissionless or open blockchains. We describe decentralized computing generally and draw parallels between open blockchain networks, e.g. Bitcoin, Ethereum, and Zcash, and the early Internet. For certain use cases, we explain why open networks may be more worthy of user trust and more capable of ensuring user privacy and security. Our highlighted use cases are electronic cash, digital identity, and the Internet of Things. Electronic cash promises efficient microtransactions, and enhanced financial inclusion; robust digital identity may solve many of our online security woes and streamline commerce and interaction online; and blockchain-driven Internet of Things systems may spur greater security, competition, and an end to walled gardens of non-interoperability for connected devices. We argue that the full benefits of these potential use cases can only be realized by utilizing open blockchain networks.

View our plain-language summary of this report

Article Citation

Peter Van Valkenburgh, "Open Matters: Why Permissionless Blockchains are Essential to the Future of the Internet 1.1," December 2016.

Table of Contents

Executive Summary

You may have heard that “blockchain technology” is the solution to any number of social, economic, organizational or cybersecurity problems. It is not. A blockchain is merely a data structure and “blockchain technology” is a vague and undefined buzzword. In this paper, we explain the true technologies that undergird blockchain networks and the distinctions between open and closed blockchain networks, why they matter, and why only open blockchain networks can solve certain specific issues related to electronic cash, identity, and the Internet of Things.

“Blockchain technology” is not a helpful phrase. It abstracts real, specific technical innovations into a generalized panacea. The phrase suggests a vague design pattern, which is then trumpeted as the solution to all manner of societal and organizational problems. And amongst all of this cheerleading, almost nothing is ever offered in the way of real design specifics. This tends to be because “blockchain technology” is described monolithically, as if there are no specific design choices to be made in building “blockchain solutions” beyond choosing to use a blockchain. The advantages and disadvantages of various approaches and technical architectures are generally not discussed (except perhaps by experts) and the non-technical public is left with a warm blanket and little understanding of why any of this matters.

This report offers specifics. It begins by describing why “decentralized computing” matters. If all of the “blockchain technology” hype has one thing in common, it’s the idea that a computer application, which creates some useful result for its users, can be run simultaneously on many computers around the world rather than on just one central server, and that the network of computers can work together to run the application in a way that avoids trusting the honesty or integrity of any one computer or its administrators. To describe this idea we prefer the term “decentralized computing” to “blockchain technology,” because it is more descriptive and it is also a broader category.

This report demystifies the actual technologies behind “blockchain technology” and explains these several technologies in a way that even non-technical readers will understand. This report creates a typology of “blockchain technologies” and it will suggest that only certain types of “blockchain technology” can be real solutions to certain major social and organizational challenges.

For starters, rather than talking about “blockchain technology” in the abstract, we discuss the real technical innovations that underlie Bitcoin, the actual functioning technology that has spurred all the blockchain hype. There are really three core innovations that underlie Bitcoin: peer-to-peer networking, blockchains, and consensus mechanisms. Of these, peer-to-peer networking is generally nothing new, and blockchains are merely novel ways of storing and validating data. Consensus mechanisms, however, are the truly disruptive, interesting, and critical component of the design. When it comes to capabilities, risks, and disruptive potential, however, not all consensus mechanisms are created equally. The critical nature of consensus mechanisms in these new blockchain-powered decentralized computing systems, and the variability in types of consensus mechanism design are why the bulk of this report focuses on explaining consensus mechanisms to non-technical audiences.

In general, by consensus we simply mean the process by which a number of computers come to agree on some shared set of data and continually record valid changes to that data. So the blockchain might be the form that the data take, e.g. a hashed list of valid transactions in bitcoin, but it is the consensus mechanism that generates that blockchain, validates the data, and continually keeps the data updated and reconciled between all of the computers in the system.

This brings us to the question of “openness” in the consensus mechanism. Who is allowed to read the data over which the network is forming consensus, and possibly more important, who is allowed to participate in the process that ultimately results in new data being added? Are some consensus mechanisms more open to free participation than others? In an open consensus mechanism anyone with a computer and an internet connection should be eligible to play a role in writing consensus data; in a closed consensus mechanism only those who have been identified by a centralized authority and given an authorization credential are allowed to participate.

The operation of various consensus mechanisms is described in the full report. Open consensus mechanisms include proof-of-work based mechanisms, as found in Bitcoin and most cryptocurrencies, as well as proof-of-stake mechanisms and social consensus mechanisms. Closed consensus mechanisms generally follow what we call a consortium consensus model, wherein only identified and credentialed consortium members share the privilege of writing consensus data.

From an innovation policy perspective, open consensus mechanisms are superior to their closed counterparts because they create purpose-agnostic platforms atop which anyone with a connected computer can build, test, and run user-facing decentralized applications. In this sense, networks powered by open consensus mechanisms mirror the early Internet, and may one day become as indispensable as the Internet in facilitating free speech, competition, and innovation in computing services.

Apart from openness, we also discuss the nature of trust, and privacy in each of the several consensus mechanisms. Open consensus mechanisms demand that users place trust in unknown third parties who are economically motivated to behave honestly because they have skin in the game and face competitive pressures. Closed consensus mechanisms demand that users place trust in the identifying authority who provisions consortium members with credentials, and the honesty and cybersecurity practices of the members themselves. Open consensus mechanisms trade transparency for privacy but new technologies such as zero-knowledge proofs and homomorphic encryption may enable open networks to have superior privacy and verifiability as compared with closed networks that rely only on perimeter security to maintain privacy.

Finally, we explain why open consensus mechanisms, specifically, are critical for three particular decentralized computing applications: electronic cash, identity, and the Internet of Things.

- Electronic Cash. Truly electronic cash (i.e. fungible bearer assets, whose use resembles that of paper notes) offers efficiencies that existing electronic money transmission systems cannot. There are hidden costs to legacy systems: chargebacks, and transactions forgone because fees are greater than the value being sent or because participants cannot obtain a banking relationship. Fundamentally, from a user’s perspective, a closed-blockchain money transmission technology doesn’t “just work” from the get-go. I cannot send or receive money until I open an account and establish a legal relationship with a company. This may be a tolerable inconvenience, but it is not a system that works like cash, which can be accepted in the hand without any prior arrangements in place. Only open consensus mechanisms, by fully automating the creation and maintenance of a ledger according to pre-established rules and economic incentives, can offer electronic transactions that are as good as cash.

- Identity. The Internet lacks a native identity layer. This shortcoming is the reason why Internet users must rely on a tapestry of weak passwords, secret questions, and knowledge of mother’s maiden names to verify their identity to various web service providers. The need for a better solution is widely recognized, and by creating a shared and unowned platform for recording identity data, open blockchains may provide the answer.

- The Internet of Things. Firstly, Open blockchain networks allow for a truly decentralized data-structure for device identity (I am a bulb in this home’s kitchen) and user access authorization (the user with address 0xE1A… is the only person who can turn me on and off). The redundant and decentralized nature of data on these networks can ensure that these systems have true longevity, and that a manufacturer’s decision to end support for a product will not destroy the user’s ability to securely access the product’s features.

Secondly, open blockchain networks can help ensure that devices are interoperable and compatible because critical infrastructure for device communication, data storage, and computation can be commoditized and shared over a peer-to-peer network rather than be owned (as a server warehouse is owned) by a device manufacturer that may be reticent to opening its costly platform to competitors.

Lastly, device payments for supporting and maintaining that networked infrastructure or allowing the device’s user to easily engage in online commerce can be made efficient by utilizing the electronic cash systems that only open consensus mechanisms can facilitate.

An open consensus mechanism decentralizes trust, spreading out power on the network across a larger array of participants. For any use-case, this decentralization helps ensure user-sovereignty, interoperability, longevity, fidelity, availability, privacy, and political neutrality. In the full report, the necessity of these attributes are explained in the context of each decentralized computing application (electronic cash, identity, and the Internet of Things), and a discussion of open and closed consensus mechanisms for that application follows.

This executive summary is a highly abridged version of the report. The full version is long because this subject is deep, and non-technical explanations must often be given in a fairly verbose narrative form. If seriously interested in these topics, we implore the reader to curl up in a comfortable chair, and dedicate some time to the full report.

I. The Decentralized Computing Revolution

If all of the “blockchain technology” hype has one thing in common, it’s the idea that a computer application, which creates some useful result for its users, can be run simultaneously on many computers around the world rather than on just one central server, and that the network of computers can work together to run the application in a way that avoids trusting the honesty or integrity of any one computer or its administrators. To describe this idea, we prefer the term “decentralized computing” to “blockchain technology,” because it is more descriptive and it is also a broader category.

A. An Easy Introduction to Decentralized Computing

The easiest way to understand decentralized computing is to begin by thinking about a computer program you use and with which you are comfortable. It could be any computer program that you use for work or for fun. For this example, let’s just pick a word processor. Sure it’s not the most titillating software out there, but pretty much everyone who has ever used a computer has used a word processor at some point in their digital lives.

Let’s think about the history of the word processor. In the old days—the 1990s no less—word processing, like dying, was something you always did alone. If you used Microsoft Word, Wordperfect, or MacWrite, you were running software that used only the processor, memory, disk space, monitor, and keyboard of your personal computer. The word processor was software trapped on an island. If you wanted to share your draft for the next great American novel, then you would either need to print it or save it as a file on a disk and hope your editor, reader, or critic had the same word processing software as you and could open the file on her own island-like computer. If she made edits she would need to send the file back and you would need to merge her changes with any changes you had made since she got a copy. Frustrating, but a real improvement over piles of redlined paper.

Fast forward to the 21st century and new word processing applications began to make collaboration easier, most notably Google Docs and Microsoft Word with OneDrive. These new services took advantage of what marketing executives persuasively and reassuringly dubbed “the cloud.” Word processing via the cloud means it is much easier to work with others in creating a document; in the best implementations you can control who has read or write access, see your co-authors typing in real time, comment and discuss changes, and see a full history of everyone’s edits.

From a computing standpoint this is not cloud magic. What is really happening is that the word processor software is no longer running on your island-like computer; it is running on a server that Google or Microsoft owns and maintains somewhere in a giant warehouse somewhere in the world. The interface that we see on our computers when we use these services is just that, an interface—a way to communicate with the computer that Google or some other cloud services provider owns and controls. Collaboration is a cinch with these systems because every editor can have an interface that talks to the same central computer. The software is still running on an island, but it’s an island that everyone can connect to.

Decentralized computing systems now under development present a new opportunity. Rather than moving the computation from the user’s device to a centralized server in order to facilitate collaborative applications like Google Docs, we could instead replicate the computation across the otherwise island-like computers of all users.

Imagine I’ve got an idea for the next hit young adult novel about dragons, and I have a co-author/by-day-herpetologist who is great at describing the scales, a cold-blooded editor at Penguin who is ready to viciously rip apart our draft, and a family of dragon-enthusiast sons, daughters, nieces and nephews who are the ideal focus group for dragonian feedback. How can we all work together to get this dragon tale off the ground? Rather than all of us connecting to a central server to view and edit the shared draft, we could have all our computers connect to each other in a decentralized web, and our computers could work together to agree upon, and stay in sync with, the latest draft, edits, discussions, and permissions describing who is allowed to edit, comment, or read.

That is decentralized computing: the ability to run applications not on your own island-computer or on someone else’s central computer, but on a truly nebulous cloud computer not owned or controlled by any single party.

Our word processing example has now, however, reached the end of its usefulness. As the PC and the Internet proved, it is not a single application like word processing that forges the value of today’s information superhighway. The value is in the highway itself; a general purpose computing platform, full of cars, buses, vehicles of all types and colors helping people reach all sorts of destinations. As discussed in the next section, the development of these purpose-agnostic platforms is the true decentralized computing revolution at hand.

B. Platforms for Innovation: Computing, Sharing, Trusting

The PC and the Internet were revolutionary not because they were self-contained innovations, but rather because they were platforms for innovation. Decentralized computing tools like Bitcoin and Ethereum, discussed throughout, are the beginnings of a new platform for innovation that promises to facilitate a third wave of computing. The PC gave us home computing and productivity applications; the Internet gave us networked computing, collaboration, and rich audio-visual communication; and decentralized computing will give us tools to enable trust, exchange, and community governance.

The PC enabled a wave of consumer and professional applications, from word processing to gaming, from music production to 3D design. Abruptly, the child of a middle income household had a printing press, a cavernous arcade, a recording studio, a suite of architectural drafting tools and paper, and more at her fingertips in a box that sat inconspicuously in her parent’s home office.

Then the Internet allowed these otherwise isolated productivity tools to be networked, to speak to the world. The PC ran applications, and the Internet enabled those applications to communicate globally, to be multi-user, to share data. Now the home printing press was matched with a fleet of newspaper delivery trucks; the arcade, still cavernous, was open to players across the world who could compete with each other; the recording studio came with a record label, trucks to ship vinyl, and stores to sell hits; the architectural tools came with virtual warehouses of objects, furniture, homes, and vehicles waiting to be built or even printed in 3D.

The Internet created a uniform mechanism for computers to speak to each other, but it did not create a uniform mechanism for verifiable agreement (what we might call “trust”) between two or more computers and their two or more users. As cryptographer Nick Szabo has written:

When we currently use a smartphone or a laptop on a cell network or the Internet, the other end of these interactions typically run on other solo computers, such as web servers. Practically all of these machines have architectures that were designed to be controlled by a single person or a hierarchy of people who know and trust each other. From the point of view of a remote web or app user, these architectures are based on full trust in an unknown “root” administrator, who can control everything that happens on the server: they can read, alter, delete, or block any data on that computer at will.1

We have come to call shared computing tools “cloud computing,” but, marketing aside, there is no cloud, there’s just other people’s computers. So when, today, we engage in any sort of shared computing—whether it be social networking, collaborative document editing, shopping, online banking, or posting a video of our pets—we are utilizing the computers of an intermediary—whether it be Facebook, Google, Amazon, Bank of America, or YouTube respectively. Those intermediaries have control over everything that happens on their servers. They can see a wealth of our personal data and users trust them to only use and manipulate that data according to user instructions and in the best interest of users. Any agreement or level of trust between two users of a given intermediary’s service—as when I sell my car to another eBay user, or recognize the positive eBay feedback and reputation of the prospective buyer—is established and maintained by that intermediary.

This architecture has been essential to the rise of the Internet and collectively we have benefited tremendously from the creation of these shared computing systems. It does, however, introduce a great deal of trust into consumer-business relationships; trust that can be misplaced and abused if an intermediary maliciously misuses their customer’s data, fails to secure it from hackers, or profits unfairly from a user who is locked into the service and finds it difficult to migrate their data to a competing service provider.

New and emerging computing architectures can help forge trustworthy relationships directly between users without intermediaries. The most visible of these new systems thus far is Bitcoin, a peer-to-peer network protocol that allows users to hold and send provably scarce tokens (bitcoins) that can function like cash for the Internet. Electronic cash, however, is just one potential computing service that can be designed to be intermediary-less, to run across the computers of a decentralized network of users rather than on the centralized servers of a particular service provider.

At root, any shared computing system can be thought of as a single shared computer, a computer made up of computers. Bitcoin is, following this logic, a computer made up of many computers whose several users have installed and are running Bitcoin compatible software. Working together, all of these computers periodically come to an agreement over the ledger of all Bitcoin transactions—the Bitcoin blockchain. That ledger is, at any moment, the authoritative “state” of the decentralized Bitcoin computer. But computer “state” can be any data, not just a list of cash-like transactions. For example, when using Microsoft Word, a writer is perpetually updating the state of her computer, typing word after word into a document whose current changes—the current state—continually appear on the screen.

If a decentralized network of computers can continuously agree on the most recent and updated state of all interactions on that network—like keystrokes to a Word document—then it could be programed to perform the computations necessary for any number of applications. Tracking the reputation of sellers and buyers, permissioning editing or access rights to a shared document, rewarding creative contributors for popular video content, any of the previously described “cloud” services provided by intermediaries could be programmed into a decentralized computing network. As Szabo has noted,

Much as pocket calculators pioneered an early era of limited personal computing before the dawn of the general-purpose personal computer, Bitcoin has pioneered the field of trustworthy computing with a partial block chain computer. Bitcoin has implemented a currency in which someone in Zimbabwe can pay somebody in Albania without any dependence on local institutions, and can do a number of other interesting trust-minimized operations, including multiple signature authority. But the limits of Bitcoin’s language and its tiny memory mean it can’t be used for most other fiduciary applications[.]2

Several efforts are underway to design systems that can enable a larger range of “fiduciary” applications, systems that will be effectively general purpose decentralized computers: platforms for trustworthy shared computing just as flexible and repurposable as the PC and the Internet have become. Some of these systems modify or build on top of Bitcoin (Rootstock3 and Blockstack4 among others), others are new standalone network protocols (the largest by value is Ethereum5). Still others are building decentralized computing systems that are closed or permissioned by default (most notably Corda by R3CEV6), in order to allow a pre-specified set of users to agree upon some limited-purpose computation—like validating contracts between banks.

The component parts of these new architectures are generally three-fold: peer-to-peer networking, blockchains, and consensus mechanisms. All three of these concepts are often lumped together under the general and impressive-sounding heading “blockchain technology,” but for clarity this report will deal with each separately and will ultimately focus on the third lump—consensus mechanisms—because it is the architecture of this third component that has the most important implications for building useful and well-functioning decentralized applications.

You can think of these three technologies as follows: peer-to-peer networking is how connected machines communicate with each other, blockchains are the data-structures the connected peers use to store important variables in the shared computation, and the consensus mechanism is the tool to generate the shared and agreed-upon computation itself.

As we will discuss, the architecture of the consensus mechanism is important to consider. Different choices may have different outcomes for users—more or less privacy, more or less choice, more or less costs to participation. Just as the fundamental technical architecture of the PC and the Internet had long term ramifications for the relative fairness, distribution and availability of computing and communication tools, so may choices in the now unfolding architecture of consensus.

As we will explain, all new approaches to decentralized computing—whether closed or open—should be celebrated and allowed to develop relatively unfettered by regulatory or government policy choices much as the Clinton Administration took a light-touch approach to the development of the Internet in the 1990s.7 In order to make those choices, however, policymakers need a basic understanding of how consensus works and what it might help us build.

C. Platforms for Innovation: Open or Closed

A fundamental question in the design of any consensus mechanism is who can participate and how do they participate in order to reach consensus over some shared computation. For many years it was assumed that useful consensus mechanisms could only be developed if the participant computers were identified through channels outside of the decentralized computing system itself.8 In other words, it had been assumed that useful consensus mechanisms could only be designed as closed or permissioned systems: to participate in the decentralized computing system a user would need to either (a) gain physical access to a private underlying network architecture (e.g. an “intranet” rather than the Internet) or (b) obtain an access credential via a cryptographic key exchange with other participants or by utilizing a public key infrastructure.9 Several such closed consensus mechanisms have been, and are continuing to be, developed.10

Closed consensus mechanisms, however, may not be optimal for the development of robust general purpose decentralized computing systems. Access to dedicated network infrastructure and/or public key infrastructure is costly, potentially limiting participation to larger players like businesses. In some cases, these prerequisites are irreconcilable with the desired decentralized computing use case, as when consensus is sought across a peer-to-peer network that allows peers free entry and exit.11 If, as described in the previous section, we believe that some decentralized computing systems should be open platforms for democratic and diverse innovation (as were the PC and the Internet), then a permissioned system seems like a poor choice.

Closed systems may be the smarter choice for limited rather than general purpose decentralized computing tasks, where consensus need not be open to all potential participants and participants can be centrally identified and trusted not to collude against the interests of the group (say when a consortium of banks wants to settle inter-bank loans according to a decentralized ledger).12 Permissionless systems are arguably more difficult to scale,13 to make private,14 or secure than closed systems.15 These, however, are technical challenges that may prove to be fully surmountable.

Much of the current skepticism exhibited by proponents of simpler, closed systems could prove shortsighted. Similar issues of scale and usability clouded early predictions about computing generally. For example, in 1951 Cambridge mathematician Douglas Hartree suggested that “all the calculations that would ever be needed in [the UK] could be done on three digital computers—one in Cambridge, one in Teddington, and one in Manchester. No one else would ever need machines of their own, or would be able to afford to buy them.”16 Similar skepticism stalked the early Internet. For example, in 1998 economist Paul Krugman wrote,

The growth of the Internet will slow drastically, as the flaw in “Metcalfe’s law”–which states that the number of potential connections in a network is proportional to the square of the number of participants–becomes apparent: most people have nothing to say to each other! By 2005 or so, it will become clear that the Internet’s impact on the economy has been no greater than the fax machine’s.17

The development of the Internet defied many such skeptics. Before we discuss exactly how open and closed consensus mechanisms work, it’s important to understand how the internet was and is itself open, and how that openness proved essential to its success.

D. The Internet and Permission

The Internet is revolutionary in large part because it avoids the costs of permissioning described above. The underlying protocols that power the Internet—TCP/IP (the Transmission Control Protocol and the Internet Protocol)—are open technical specifications.18 Think of them like human languages; anyone is free to learn them, and if you learn a language well you can write anything in that language and share it: books, magazines, movie scripts, political speeches, and more. Importantly, you never need to seek permission from the Institut Français or the Agenzia Italiana to build these higher level creations on top of the lower level languages. Indeed, no one can stop you from learning and using a language.

When Tim Berners Lee had the idea of sending virtual pages filled with styled text, images, and interactive links over TCP/IP (i.e. when he invented the Word Wide Web),19 there was no central authority he needed to approve the project. He could write the standards and protocols for displaying websites—the higher level internet protocol known as HTTP (the Hypertext Transfer Protocol), and anyone with a TCP/IP capable server or client could run freely available HTTP-based software (web-browsers and web-servers) to read or publish these new rich web pages.20 As a result, the Internet went from a primarily command-line text-only interface to a virtual magazine full of pleasantly styled pages full of text, pictures, and links to other related pages, and it made the transition without any formal body approving the change. Every Internet user was free to opt-in or opt-out of the new format, the World Wide Web, as they so desired simply by choosing whether or not to read and write internet data with the new higher level protocol, HTTP.

Today, thanks to the open, permissionless architecture of TCP/IP and higher level protocols built on top of it, no one needs to gain access to a private network in order to create a blog or send an email. Nor must an Internet user obtain a certificate of identity to participate in online discussions. Nor must a hardware designer obtain permission to build a new gadget that can send and receive data from the Internet.21 This openness has been a major factor in democratizing communications, and spurring vibrant competition and innovation. Anyone can design, build, and utilize hardware or software that will automatically connect to the Internet without seeking permission from a network gatekeeper, a national government, or a competitor.

It is true that businesses often utilize public key infrastructure online, and that this does add a layer of permissioning to the web. When you visit an online bank, for example, your web browser will look for a signed certificate issued by a certificate authority that has vouched for the Bank’s online identity.22 This begins a process between your browser and the bank that will ultimately encrypt all of your communications while you are navigating the website. This process is known as TLS/SSL (Transport Layer Security and its predecessor, Secure Sockets Layer), and it is the system behind the little green lock consumers are told to watch out for when visiting sensitive websites like banks.23

TLS/SSL, however, is another application-layer Internet protocol—like HTTP—that runs on top of the open TCP/IP network. Again, the underlying protocols are the reason for the Internet’s openness. When a consumer device is connected to the Internet these protocols do not ask for identification, certificates, or authentication; they simply assign the new device a seemingly random but unique pseudonym (called an IP Address) in order to have a consistent address for routing data.24 The identified and permissioned layer, TLS/SSL, is running on top of the open and pseudonymous layer.

The layered design of the Internet is not accidental. It is modular, with an open lower layer, in order to enable flexibility. One can always build identified and permissioned layers on top of a permissionless system—as TLS/SSL (a closed, identified layer) is built on top of TCP/IP (an open, pseudonymous layer). The reverse is not possible, however. Had the Internet originally been architected to be permissioned and identified, it would have imposed costs and limitations on open public participation, and it would have ossified the possible range and diversity of future higher level protocols for identity and permission. When lower layers are permissionless and pseudonymous, on the other hand, the costs of participating are low (merely the cost of hardware and free Internet-protocol-ready software), and such an open platform enables a variety of closed or identified higher level layers to emerge and compete for particular use cases where identity and permissioning are essential. For example, PGP and the Web of Trust compete with TLS/SSL as methods for enabling secure and identified communications built on top of TCP/IP.

We are still in the very early days of decentralized computing systems, and there remains much uncertainty over which protocols and systems will come to dominate the space. Given that uncertainty, it is possible that these systems will not follow the evolution of the Internet or the PC and instead be permissioned by default at the lower level. The key takeaway from a policy perspective, however, should be (1) awareness of the technological features that enabled the Internet to flourish as a democratic and innovative medium—modularity, openness, and pseudonymity, and (2) a willingness to allow these new decentralized computing systems to evolve similarly unencumbered even when openness and pseudonymity cause regulatory confusion or concern because of their newness and sharp contrast with legacy systems.

II. Making Sense of Consensus

It’s easy to be excited about the applications that can be built on top of decentralized computing platforms. They usually have an easy and provocative elevator pitch: this app will let you send money instantly, and this app will save you from creating and remembering hundreds of passwords! Talking about the infrastructure that powers and enables those apps, however, is harder because the discussion will often be laden with technical jargon and the purpose of the system will be more abstract (i.e., to create a platform for applications that have human-facing purposes).

These underlying architectures, however, have real ramifications for consumer protection and freedom of choice, so it’s important that policymakers and concerned citizens understand the various models that are being developed. Just as it can be daunting to learn about internal combustion or gene sequencing, we understand that knowledge of these topics is key to forming good policy for car safety or GMO foods. Similarly, policy aimed at regulating the application level of decentralized computing (e.g. money transmission, identity provision, consumer device privacy) should be informed by knowledge of the underlying infrastructures. This section will explain those technologies in general, but first a disclaimer.

This is not a document intended for technologists, and many of the salient features of these mechanisms will be spoken of in the abstract. Just as one can explain the principles behind internal combustion engines without discussing the acceptable tolerances in the machining of a piston and gudgeon pin, we will attempt to give an accurate general description of decentralized consensus while avoiding discussion of the merits of sharding or SHA-256.

Speaking generally, the goal of a consensus mechanism is to help several networked participant computers come to an agreement over (1) some set of data, (2) modifications to or computations with that data, and (3) the rules that govern that data storage and computation.

To use Bitcoin as an example, the network of Bitcoin users run software with an in-built consensus mechanism. This consensus mechanism helps all of the peers on the network (Bitcoin users):

- Store agreed-upon data: every peer gets a copy of the full ledger of all bitcoin transactions in the history of the network.

- Compute and transform that data: recipients of bitcoin transactions can write new transactions thus adding to the ledger all transactions.

- Agree on rules for how storage and computation of that data can take place: the ledger is continually updated because all peers listen for and relay new transactions if they are valid, and a lottery is used to periodically pick a random peer to state the authoritative order of valid transactions for chunks of time that are about 10 minutes long. (There are other rules but these are probably the most general and fundamental bitcoin consensus rules).

If this example is not entirely clear, that’s OK. We will expand upon it later in this report. The key thing to remember is that consensus means that a network of peers can agree upon three things: (1) data, (2) computation (transformation of the data), and (3) the rules for how computation can take place.

Any particular consensus mechanism can be designed to leverage two techniques in order to ensure agreement over a computation and the associated data.

First, there are what we can call automatic rules. To use an automatic rule, all parties to the consensus can run software on their computers that automatically rejects certain “invalid” computational operations or outcomes on sight. To make a legal analogy, we can think of this as res ipsa loquitur (the principle that the mere occurrence of an accident implies negligence), or a rule of strict liability.

For example, Bitcoin’s core software defines certain outcomes as always impermissible on sight. Most notably, transactions from one user to another cannot send any bitcoins that have not previously been sent to the sender.25 More simply: I can’t hand you cash that hasn’t previously been given to me. To be compatible with the larger Bitcoin network, the software you run on your computer must follow this rule. If it does not, other nodes on the network will ignore any invalid messages you send using it. You can try to send the network messages that attempt such counterfeiting, but your messages will always fall on deaf ears and the effort will be futile. These are automatic rules that help the network ignore data that is irrelevant or malevolent to the agreement the participants are seeking.

Second, there are what we can call decision rules. In situations where there are two differing outcomes from the computation, but where both would be valid based on the automatic rules, a rule of decision between each possible valid state is needed in order to keep the network in agreement. All parties to the consensus can agree in advance (by choosing which software to run) to always honor one possible valid outcome over another possible valid outcome based on a decision rule. From a legal perspective this is more like a judgement of fact from a jury at trial.

For example, Bitcoin’s core software does not tell you when any particular valid transaction comes before another valid transaction in the order-keeping ledger of all historic transactions. This order is, nonetheless, critical to determine who paid who first. Instead of using an automatic rule to settle uncertainties regarding transaction order, Bitcoin’s software specifies a decision rule to resolve debates over which valid transaction came first.26 Specifically, the Bitcoin software calls for a repeated leader election by proof-of-work, which we will discuss in a moment while outlining proof-of work consensus. For now, it’s important to simply understand that there are various ways of establishing a decision rule in order to reach consensus over the authoritative state of a decentralized computing system when multiple valid states are possible. All currently employed methods fall into four broad categories: (A) proof-of-work, (B) proof-of-stake, (C) consortium consensus, and (D) social consensus.

A. Proof-of-Work

As just mentioned, Bitcoin employs a proof-of-work leader election as the decision rule for determining the order of valid transactions in the blockchain. Such a consensus method might be useful for various decentralized computing systems, but Bitcoin allows us to describe a working example. Leader election means that one participant’s record of which transactions came first, second, third, etc., will be selected by all other network participants as the authoritative order of transactions for some designated period of time (beginning with that participant’s successful election as leader and ending with the next leader election). We can see how this is a rule of decision, it says essentially: whenever there is disagreement over two alternative but valid outcomes, defer to the chosen leader’s choice for the given period.

Proof-of-work is the specific method found in the Bitcoin protocol that describes how a leader is periodically chosen.27 The proof-of-work system is essential to keeping the consensus mechanism open. This “election” is, therefore, not anything like the democratic political process to which we are accustomed. After all, if users come and go, freely connecting to the open network without identifying themselves, how would we ever keep track of who is who, or who is trustworthy and deserves our vote? So instead of having a vote, the network holds a lottery where there will be a random drawing and a winner every so often (roughly every 10 minutes for Bitcoin and every 12 seconds for Ethereum).28

The term leader election is the correct computer science term for this architecture,29 but for the rest of us that sounds like something that involves voting and majorities rather than probabilities and lotteries. For clarity we will use the term leader lottery from here onwards.

Selecting a periodic leader via lottery in the real world would be easier than finding one on a peer-to-peer network. We could all meet in a room, introduce ourselves, and make it real simple by having everyone put their names in a hat and have one blindfolded person pull out a winner.

That simplicity doesn’t work online. If all our peers on the network are putting names in a digital hat, we have no idea if each digital name matches one-to-one with a real person.30 We could reasonably expect some less-than-scrupulous individuals to make up a bunch of random fake names and stick them in the hat. In the digital world we’d have no way of knowing whether Alice, Beth, Chuck, Dana, and Eve are each real individuals or merely pseudonyms (i.e., “sock puppets”) made up by Alice in order have a better chance at winning the lottery. We could try to employ some digital identity system to stop that fraud, but then we would be relying on an external identifier to guarantee the fairness of the system, and that defeats the point of having an open, ungated system to begin with. It would make it costly to participate because you would need to get identified in the real world to do your computing on the decentralized network, and it would force everyone to place trust in the identifier.

Rather than identify all lottery participants and pick names from a hat, we could have a ticket-based lottery, like Powerball. These lotteries only work if the lottery tickets have a cost (if they were free how many tickets to the Powerball would you claim for yourself?). A proof-of-work consensus system merely seeks to make it costly to enter yourself in the lottery. So Alice could still have more than one chance to win, but she incurs real costs every time she buys a new chance.

This has two desirable consequences that help make the lottery a good tool for selecting periodic leaders in a consensus mechanism. (1) Decentralization: It would be prohibitively costly to amass enough tickets to ensure that you would be the periodic leader for many repeated periods. (2) Skin-in-the-game: Leaders tend to be participants who have made sizable investments in the system by buying costly tickets. Generally speaking, the first reduces the capacity for self-dealing (always putting your transactions first), and the second ensures that the costs of malfeasance are internalized by the participants (who have invested real capital in the long term success of the platform).

But how do we make those tickets costly when there is no central authority to verify payment? A proof-of-work consensus mechanism imposes costs on participants by making every ticket costly as measured in computing power that provably performs some “work,” hence the name proof-of-work. Effectively, every lottery ticket costs one attempt at solving a difficult math problem that can only be solved with guess-and-check.

Think of the Bitcoin lottery ticket as a Sudoku puzzle. To win you need to solve a math puzzle that is difficult (guessing and checking numbers that make rows and columns sum up correctly), but easy for others to check if you have solved it (just sum up the rows and columns). Participants in the network previously agree (with an automatic rule) that the winner of every periodic leader lottery will be the person who first solves the math problem. Ultimately, finding a solution comes down to a lucky guess, but you can make more guesses faster if you have more powerful computers. Because, like Sudoku, it is easy to check someone else’s solution, all participants will discover quickly if someone has cracked it, and they will move on to solving the next problem so they can be the leader in the next period.

You might be wondering… who is setting these problems up?! How is there not an all-powerful algebra teacher controlling Bitcoin? There isn’t, because Bitcoin uses an open ended problem that is specified using only publicly available information found in the Bitcoin protocol software. To extend our classroom metaphor, imagine that the problem on the blackboard is this: flip a coin heads up 20 times in a row—a completely open-ended problem. First, we students all agree the problem on the blackboard is the problem we are all competing to solve (an automatic rule), and then once we get flipping, we can all agree if someone does it. Then, once someone “wins,” that person is the leader, and we can begin flipping coins again to determine the next leader. We never need a teacher or central authority to present the next problem, we just go ahead and compute the same problem. It’s difficult to get less metaphorical or more specific than that without discussing cryptographic functions, something we would like to avoid in this general overview.31

What is important to take away from this discussion is that participants enter the lottery by guessing solutions to a publicly posted math problem with their computers, and that more computing power will mean more guesses (more coin flips), which means more chances to win. Because computing power is expensive (both in terms of buying computer hardware, and using electricity to power computing cycles on that hardware) every additional lottery ticket has a cost to the participant.

But if lottery tickets in this leader lottery are costly, then why even participate? After all, the prize for winning would be the right to provide what is effectively a public good: offering an authoritative list of valid transactions on the network for a period of time. This could provide the winner with some benefits (such as ensuring that her own transactions get included in the ledger) but most of the benefits go to the other network participants who get to use an open ledger. So, proof-of-work systems also generally provide a cash reward (in the form of the tokens native to the network) to the holder of a winning ticket, usually called the mining reward. This reward can be any fees that were voluntarily appended to transactions by senders on the network (in order to make their transactions more appealing for an elected leader to include in the section of the ledger she is writing), as well as permission within the software’s automatic rules to create new money by sending herself a transaction with no source of funds (socializing the cost of a reward through inflation).32

Bitcoin users who decide to participate in this leader lottery have come to be called Miners because they perform “work” in return for newly created value. The label, however, belies the larger role these participants play in generating and maintaining consensus across the decentralized computing system. Both the work and the reward are secondary technical features necessary to the creation of a decentralized mechanism for picking periodic leaders who can ensure that data discrepancies between participants are quickly and fairly resolved.

Without a reward baked into the conesus mechanism, it is hard to understand why users would be incentivized to participate honestly in maintaining the network. Much fuss has been made over developing a “blockchain without the bitcoin,” as if the currency-aspect of the network pollutes what would otherwise be a useful network technology with an ideology or political agenda (or, at the very, least creates too many regulatory complications to be worth the trouble). But, as we can see, the only way to maintain an open network where leaders need to be periodically selected and rewarded for their participation is to award them with tokens that are native to the network itself (i.e., the transaction history and scarcity of the token are a part of the data over which the consensus network is continually coming to an agreement). If participants are rewarded with assets that exist only according to data structures outside the network (e.g. dollars or yen, the balances and scarcity of which are described in the balance sheets of banks) then we’ve reintroduced the need for identified parties who must be trusted to perform the rewarding function honestly and without bias.

Open blockchain networks need scarce tokens for technical reasons, not (merely) because their proponents may have political or ideological motivations for supporting alternative currencies. Ethereum, for example, is an open-consensus-driven decentralized computing network that aspires to provide several user-applications aside from electronic cash (e.g. identity management,33 reputation accounting,34 community governance,35 etc.), but it still has a scarce token that rewards winning participants in the leader lottery: ether. A blockchain without bitcoin or similarly scarce token is a closed network, essentially a shared database with pre-identified and authenticated users.

To recap, an open consensus method should allow anyone to participate without obtaining some sort of credential from an external identifier. Without identification, however, a user could pretend to be several users and gain an unfair advantage in the leader lottery used to reach agreement when there are disputes over two or more valid outcomes (like alternative orders of transactions in a ledger). To deal with this problem, participation in the leader lottery is made costly by demanding that participants solve difficult math equations that will require costly hardware and electricity—proof-of-work. As a result, it (A) becomes too expensive to dominate the lottery by obtaining a substantial number of tickets, and (B) ensures that lottery winners are invested in the long term success of the decentralized computing system. Winning participants are, in turn, rewarded with a scarce token native to the network.

B. Proof-of-Stake

Now that we have an intuitive understanding of proof-of-work consensus, it is fairly simple to explain the general mechanism behind proof-of-stake consensus. Recall that the goal behind proof-of-work is to make participation in the consensus costly. If the consensus mechanism involves a leader lottery, then we employ proof-of-work to make buying up all the lottery tickets prohibitively expensive.

Proof-of-stake systems are also designed to make participation come at the cost of some provable sacrifice. Instead of requiring calculation in exchange for a lottery ticket, a proof-of-stake mechanism requires that participants prove that they hold and/or can temporarily forgo access to a valuable token that travels on the network.36 So if Bitcoin was a proof-of-stake based cryptocurrency, then participation in the lottery could require users to stake some of the bitcoins they control—to prove that they control or to sacrifice their control over those valuable funds. The mechanism could demand that participation requires merely a mathematical proof that the user has possession of these tokens on the blockchain, or it could demand the permanent relinquishment or even destruction of these token (something often referred to as “proof-of-burn”37), or it could be a temporary stake, effectively a bond (e.g. I stake 50 bitcoins—and thereby relinquish my ability to spend them—for the next 150 cycles of the leader lottery at which point I will regain control over the coins and can decide whether to stake again in the future). Regardless of how exactly it is specified, the goal is to use the value of the tokens (rather than the cost of computing) as the provable signal necessary for participation in the leader lottery.

If the tokens that travel on this decentralized network are available for sale on a variety of competitive exchanges (whether in exchange for dollars, euros, or other cryptocurrencies) or can be obtained by free transfer from existing users (whether as a gift or in payment for labor or some valuable good) then anyone with sufficient economic resources can, in theory, join the consensus, because they can obtain the tokens necessary to offer a proof-of-stake. In this sense, proof-of-stake consensus methods are, like proof-of-work methods, open.

C. Consortium Consensus

Consortium systems have a simpler solution to making lottery-style elections fair: only allow identified parties to participate. If we decide to trust an outside authority to identify all consortium members, provisioning members with cryptographic keys which they can use to sign their communications and prove authenticity, then we can run software that would only grant lottery tickets to participants who send validly signed messages.38 We know Alice, Beth, Chuck, Dana, and Eve are each real individuals because we previously provisioned them each with secret keys and to obtain a lottery ticket each signs a message with his or her unique key.

This consortium method avoids the costs of solving math problems or staking valuable tokens that is inherent in proof-of-work and proof-of-stake systems.39 The consortium method, however, also reintroduces permission and trust into the decentralized computing system. We need to be identified and granted access to the network in order to participate and we need to trust that the party tasked with making these identifications is acting fairly.

D. Social Consensus

Finally, we come to the last general category of consensus mechanisms, social consensus. You can think of the social consensus mechanism as somewhere in between the fully identified and permissioned consortium model, and the fully pseudonymous and open proof-of-work and proof-of-stake models.

Like the consortium model, you choose to trust some identified participants rather than relying on pseudonymous participants who offer a costly signal of credibility. Unlike the consortium model, however, each individual is her own identifying authority; she can choose which counterparties she trusts and build a social network of those with whom she feels comfortable entrusting the role of writing new data to the blockchain (or agreeing on some computation generally). We might then expect various users with differing social networks to disagree over the authoritative state of the consensus data, but the network can be designed to come to global agreement by looking for a sub-set of all transaction or computation data that some minimum number of trusted participants (perhaps a majority or a supermajority of trusted participants on the network) have agreed upon.40

As with proof-of-work and proof-of-stake consensus mechanisms, a social consensus mechanism will generally be open. Anyone can join but they must be selected as trustworthy by some minimum number of participants before they can participate in full.

III. Openness, Trust, and Privacy Across Various Consensus Models

We’ve spent a good deal of time outlining these various consensus models because the specifics of their architecture will inevitably have meaningful consequences for the applications that are built on top of them, and, by extension, the people who will use those applications. One does not simply procure some “blockchain technology” to build better digital identity systems, property registries, voting infrastructure, or any of the other ambitious killer apps that have been proposed and widely touted for this technology. Building any of those applications will require either (A) the modification and use of an existing consensus network (e.g. build the application on top of Bitcoin or Ethereum) or (B) the creation of a new consensus network (both the development of consensus software and the bootstrapping of a network of peers who run the software that generates the consensus). The choice of whether to use one of the existing open (i.e. proof-of-work, proof-of-stake, or social consensus) networks, to create a new open network, or to design and implement a closed consensus network will be a choice that affects the relative openness of the application, the degree of trust that users must place in other users or maintainers of the application or the underlying network, and the degree of privacy that the application is capable of offering its users. Each of these key consensus mechanism attributes, openness, trust, and privacy will now be discussed in turn.

A. Openness Across Consensus Mechanisms

Speaking generally, open-consensus-driven decentralized computing systems are exciting and disruptive because their openness resembles the early Internet. As we described previously, the Internet became the vibrant ecosystem we know today largely because it is so easy to build hardware or software that can seamlessly integrate with TCP/IP, the lower level networking protocol (language) that powers the network. That lower level is pseudonymous. Devices connect to the network and are automatically assigned a seemingly random number rather than a real-world identity.41 The lower level is permissionless. Devices can send or receive data to and from any other pseudonym so long as the messages conform to the protocol specification.42 The lower level is general purpose and extensible. TCP/IP only describes how packets of data should move through the network. It does not dictate what the contents of those packets can or should be.43 Higher level protocols can be built on top of TCP/IP to interpret sent data as web pages, links, videos, emails, SWIFT bank messages,44 anything that can be imagined, invented, and digitized.

The similarity of TCP/IP to Bitcoin, Ethereum, or any other open blockchain network should be apparent. These systems are also pseudonymous. Users are assigned random but unique cryptographic addresses.45 These systems are also permissionless. Users can read or write data to the blockchain at will, sending or receiving transactions without seeking the permission of any centralized party. And these systems are also general purpose and extensible. Several parties are building new applications and application-layers on top of the bitcoin network,46 and Ethereum is explicitly designed to be a flexible foundation for building any trust-minimized application.47

In the previous section we classified four types of consensus mechanism into two groups:

- Open: Proof-of-work, Proof-of-stake, Social Consensus

- Closed: Consortium Consensus

Decentralized computing systems built using open consensus mechanisms will, in general, be available to any participants who have an internet-connected device and free software that is compatible with the network. Systems built using a closed consensus mechanism will, in general, only be available to participants who have previously identified themselves offline and been granted some form of credential by the identifying authority, which they can use to authenticate their identity whenever they connect to the network.

This characterization of openness lacks, however, an important nuance. There are basically only two things that any user or potential user might want to do with a decentralized computing network: (1) write data to the network and have it included in the consensus-derived data structure or blockchain, or (2) read data from that network’s consensus-derived data structure. Accordingly, a Bitcoin user making a transaction is writing new data to the bitcoin blockchain while a user who queries their balance to confirm payment receipt is reading data from the blockchain.

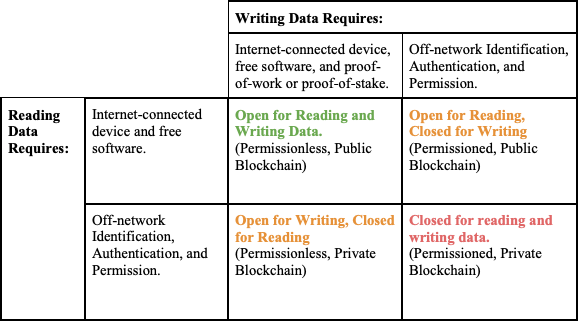

Some have characterized networks where users can freely write consensus data as “permissionless.” That is in contrast to “permissioned” networks where users need off-network identification and authentication in order to write. Read access is then characterized as public (anyone can read consensus data) vs. private (only identified and authenticated participants can read consensus data). These terms, however, can be confusing so we will stick to open and closed irrespective of whether the particular activity involved is reading or writing data. For clarity, however, we can summarize this more nuance characterization with a four-by-four matrix:

Note an important subtlety in this chart. Open for reading is characterized as requiring only that the reader have an Internet-connected device and free software, while open for writing requires those things but also a proof, either -of-work or -of-stake. Bitcoin and Ethereum both exhibit this form of read/write openness. Anyone with an internet-connected device and free software can connect to these networks and download the full set of consensus data, e.g. the blockchain or list of all valid transactions made on the network from its start. Writing new data to these networks is not quite as easy. If one wants to truly be the node on the network that adds new data to the blockchain, one will have to be selected in the leader elections described in the previous section.48 So, to truly write new data on these networks one must provide a proof (of computer work or of stake in the network’s native token) and then be selected in the network’s leader lottery. Even then, however, the user will only truly write data to the blockchain for those periods in which she has been chosen as leader.

This, however, is an overly pedantic description of who may write data on these networks. Thousands of people do write data to these open blockchain networks without ever running a node that makes a proof, i.e. mining. This is because anyone can send a new transaction message to various peers on the network and reasonably expect that the transaction will be picked up by a proof-making-node, i.e. a miner, who will then incorporate it into a block of transactions which will then be added to the blockchain when that miner wins the leader-lottery for a given period. Non-mining peers who want to ensure that their transaction will be written to the blockchain quickly can attach a fee to that transaction which will reward the miner who wins the leader lottery and is the first to incorporate the transaction in the blockchain.49

Relying on these proof-making nodes to write data may seem like a kind of permissioning, and it is true that any particular user who is chosen in the leader-lottery can, for that period, decide which new data will and which new data will not be written to the blockchain. Taking bitcoin for example, it is true that for the duration that a miner wins the leader lottery, she can censor or block any other user from transacting.

There are two factors that make these systems permissionless in spite of the power of miners or proof-makers to block or screen write-access: self-interest among competing proof-makers, and ignorance of the data that enters the blockchain.

Self-interest. If a user wants to ensure that her transaction will be added to an open blockchain, she can append a fee to the transaction.50 Miners or proof-makers on the network compete with each other for the block rewards that come with winning the leader lottery. Block rewards are comprised of any fees that were appended to transactions as well as any new money being created through programmed inflation. It is with these block rewards that miners can finance the expensive hardware and electricity necessary to perform competitive proof-of-work calculations or justify the costly sacrifice of tokens necessary in making a proof-of-stake. Blocking transactions will reduce the fee-revenue component of the block reward, leaving censorship-favoring proof-makers at a competitive disadvantage. Therefore it goes against the self-interest of proof-makers to selectively censor (i.e. permission) the network. Additionally, to the extent that a network is famed for being censorship resistant, e.g. Bitcoin,51 negative publicity from a proof-maker’s decision to censor transactions may erode faith in the network as a whole. This could cause the market price of the network’s tokens to fall, thereby reducing the real value of the proof-maker’s returns and/or motivating the community to enforce anti-censorship norms by shaming the offending proof-maker.

Ignorance. Proof-makers may not have very much information about the data they are writing to the chain. In other words, the proof-maker may know that a particular transaction is valid (because the digital signatures are valid and the sending address is appropriately funded) but she may have no way of knowing who the real-world sender or recipient in the transaction could be. As we will discuss in the section on privacy,52 new technologies such as zero-knowledge proofs, could ensure that proof-makers as well as the public can gain effectively no information from the blockchain aside from a proof that all transactions are valid according to the consensus rules of the protocol. In this situation, proof-making or mining become an activity divorced from any sort of off-network or personal decision making, people simply run machines that always add data to the blockchain if it is valid according to the rules of the protocol and are never in a position to discriminate against users for any other reason.

It’s simply not necessary to go into this highly nuanced analysis when it comes to consortium-based consensus mechanisms. By definition, these systems will be permissioned at the write-level because only previously identified participants can participate in the consensus. A choice could then be made by the designers of the system, to make read access to the results of that consensus public or private.

B. Trust Across Consensus Mechanisms

Early decentralized computing systems, like Bitcoin, are designed for serious uses. These networks custody people’s valuables, help them move their money.53 These networks may soon keep track of their user’s identity credentials,54 and eventually even—in the case of the Internet of Things—help them control their door locks, their baby monitors, their cars and their homes.55

A fundamental design goal of these systems is to decentralize control over the network such that a user will not need to trust a bank-like company’s honesty in order to safeguard her money,56 or trust a technology company in order to safeguard access to her smarthome devices.57 Who or what do you trust to guarantee these systems if not a reputable intermediary, and how does that model of trust change depending on the type of consensus mechanism employed in the system’s design? These are the questions addressed in this subsection.

To start, any discussion of trust must deal with three essential sub-topics:

- Software: Every system described in this report is built from software, and the auditability of that software, as well as the nature of the process of writing that software is the first concern we should have when we ask ourselves: can I trust this system?

- Consensus: The software describes what we have called automatic rules and decision rules. The administration of these rules and the creation of consensus amongst the participants of the system is our second concern with respect to trust.

- Purpose: “Trust” or “trustworthiness” is not a monolithic whole. The parties to the system may demand varying requirements from the system: a system to operate an office sports betting pool may not need to be as trustworthy as a system for executing interest-rate swaps among banks. Additionally, the parties to the system may have a good reason to put faith in their fellow participants, and therefore they may not need a system designed to fully supplant trust in one’s counterparties.

i. Trust in Software

As a first pass, it is important to recall that much of the agreement between participants in these systems is established by what we called automatic rules that are specified in the software. Additionally, we must remember that decision rules will also always be described in the software, even if the decision-making process is then carried out by network participants (whether through proof-of-work, proof-of-stake, consortium, or social consensus means). The software is therefore, to make another legal analogy, the constitutional law of the network; it describes the process by which all subsidiary legal structures should and will ultimately function. The software is always the first element of the system that we must consider when judging the system’s relative trustworthiness.

As a general rule, open source software (i.e. software whose source code can be viewed and audited by any and all interested parties free of any need to seek a copyright license or permission from a patent holder) may be preferable in the context of decentralized systems.58 Software design provides an opportunity for developer transparency, an opportunity for a developer or group of developers to put their cards on the table and show with precision what it is they are building. It also subjects that design to an unbounded set of potential security auditors who may detect innocent mistakes as well as malicious backdoors.59 Without visibility into the software we are putting a good deal of faith in the person selling us that software or advocating for its use. Closed source software, also referred to as proprietary software, may be superior for various applications (e.g. a word processor, or a game), but for decentralized applications that we intend to trust with our money, reputation, identity, or any other valuable agreement between users, closed source software creates real risks. To extend our legal metaphor, a closed source consensus protocol is not unlike a constitution that no one in the country is allowed to read without seeking permission from the drafter or central government.

To give a real world example, imagine if someone decided to create an alternative to Bitcoin by copying and modifying the Bitcoin software. What if this person changed the automatic rule that requires all transactions to be funded by prior transactions, to a rule stating that one particular pseudonymous participant would be allowed to send transactions out of thin air. If we are going to use this bizarro-Bitcoin as a shared currency, we would certainly want to know that this change to the software’s automatic consensus rules has been made. Our new bizarro-Bitcoin network is now allowing one special user to print money to her heart’s content. If we have no way to freely read and audit that code (or to rely on a diverse range of third-party validators to do that audit independent of the software author) then we have no reason to trust the network it creates or the agreements it powers.

ii. Trust in the Consensus

After looking at the software, we next need to judge the trustworthiness of the consensus mechanism implemented by the software. Regardless of what some more fervent advocates of these new technologies may say, no system is truly “trustless.” No system relies purely on “math” or “cryptography” to ensure that the agreement reached by the network is in any way just or perfect. Instead, these systems are designed to be trust-minimizing, designed to rely as little as possible on the honesty of the network’s participants, usually by making deceptive or fraudulent participation go against the economic interests of the participants. So, aside from being open or closed, we can also discuss how each category of consensus mechanism attempts to minimize trust.

In proof-of-work and proof-of-stake systems, so long as we believe that the participants who together control a simple majority of the total computational power on the network (for proof-of-work) or the staked token value on the network (for proof-of-stake) are behaving honestly, then the network’s decision rules will work as intended. The need for trust in the network’s participants is obviated so long as half its participants are not united in trying to attack it. If a dishonest party or parties assumes control of a simple majority of the computational power or staking ability on the network, then they can effectively control the outcome of all decision rules, and the results may differ substantially from the expectations of honest participants.

To take Bitcoin as an example, a party with majority control of the network’s total computational power could: (1) refuse to put certain transactions into the shared ledger indefinitely, (2) consistently favor her own transactions over others in the speed with which they are recorded in the ledger, and (3) periodically rearrange the ledger’s order going back as far in history as she has had the majority of power on the network.60 She cannot, however, violate the automatic rules on the network: she cannot spend other people’s bitcoins, nor can she create more bitcoins than would normally be allowed under the monetary policy rules of the software. By sending messages that violate these automatic rules, she loses compatibility with the network and ceases to take part in the consensus mechanism that enforces decision rules like transaction order.

So in proof-of-work and proof-of-stake systems, we can generally trust that the shared computation is valid and fair so long as we believe it is cost-prohibitive for a malicious actor to amass sufficient computing power or staked tokens to have a majority on the network.

Proof-of-stake systems still lack a robust working prototype. The most notable system, Peercoin, suffered a spate of attacks and reverted to a state where the developers created a whitelist of permissible stakers (effectively a consortium model).61 Some theorize that a robust proof-of-stake consensus mechanism is an impossible goal, but considering that is beyond the scope of this report.62

The availability of what is called “forking”63 adds an additional wrinkle to the question of trust in networks that utilize open consensus mechanisms. If two or more factions of users on the network fail to reach an agreement over what we have called “automatic rules,” then the network will divide in two or more parts. They will share a computational history up until this impasse but, from the time that one faction chooses to alter their software’s automatic rules onward, they will forge new and distinct futures. This has been the case in several so-called hard forks of cryptocurrency networks.64

To understand the trust implications of hard forks, we need an example. According to an automatic rule in the bitcoin consensus mechanism, which we’ll call the supply rule, there can only ever be 21 million bitcoins.65 This hard limit in the code forms the basis of bitcoin’s value proposition: you are willing to hold and trade these otherwise made-up tokens for real goods because their supply is known to be finite. With supply fixed, any demand from a community of users will result in a positive price. If we choose to trust Bitcoin’s long term valuation, we’ll have to worry about fluctuations in demand affecting the price, but at least we won’t need to worry about an increase in supply diluting the value of our holdings with inflation. The effect of the supply rule is to Bitcoin’s value as the effect of the earth is to the value of gold when it resists gold-mining.

While it has never happened, we could imagine a fork of Bitcoin where part of the network wants to increase the total supply of bitcoins from 21 to 42 million by changing that automatic rule. We’ll call the more-bitcoins partisans KeynesCoiners, and the rest of the users we’ll call MiltonBitters. As soon as the KeynesCoiners update their software to incorporate a change in the supply rule, transactions and blocks from a KeynesCoin computer are invalid when received by a MiltonBit machine and vice versa. Both sides of the network recognize a common history of bitcoin transactions, but going forward they will have irreconcilable futures. If you held bitcoins before the fork, you now have bitcoin balances on both networks (because they share a common history before the fork), and you can run KeynesCoin software on one computer while running MiltonBit on another in order to move your bitcoins on either or both sides of the newly forked network.